OpenClaw Extended: Paperclip Comparisons and Claude Code Plugins

This updated OpenClaw operations guide covers gateway structure, plugin and skill usage, two paperclip comparison scenarios, and how the Claude Code workflow differs in practice.

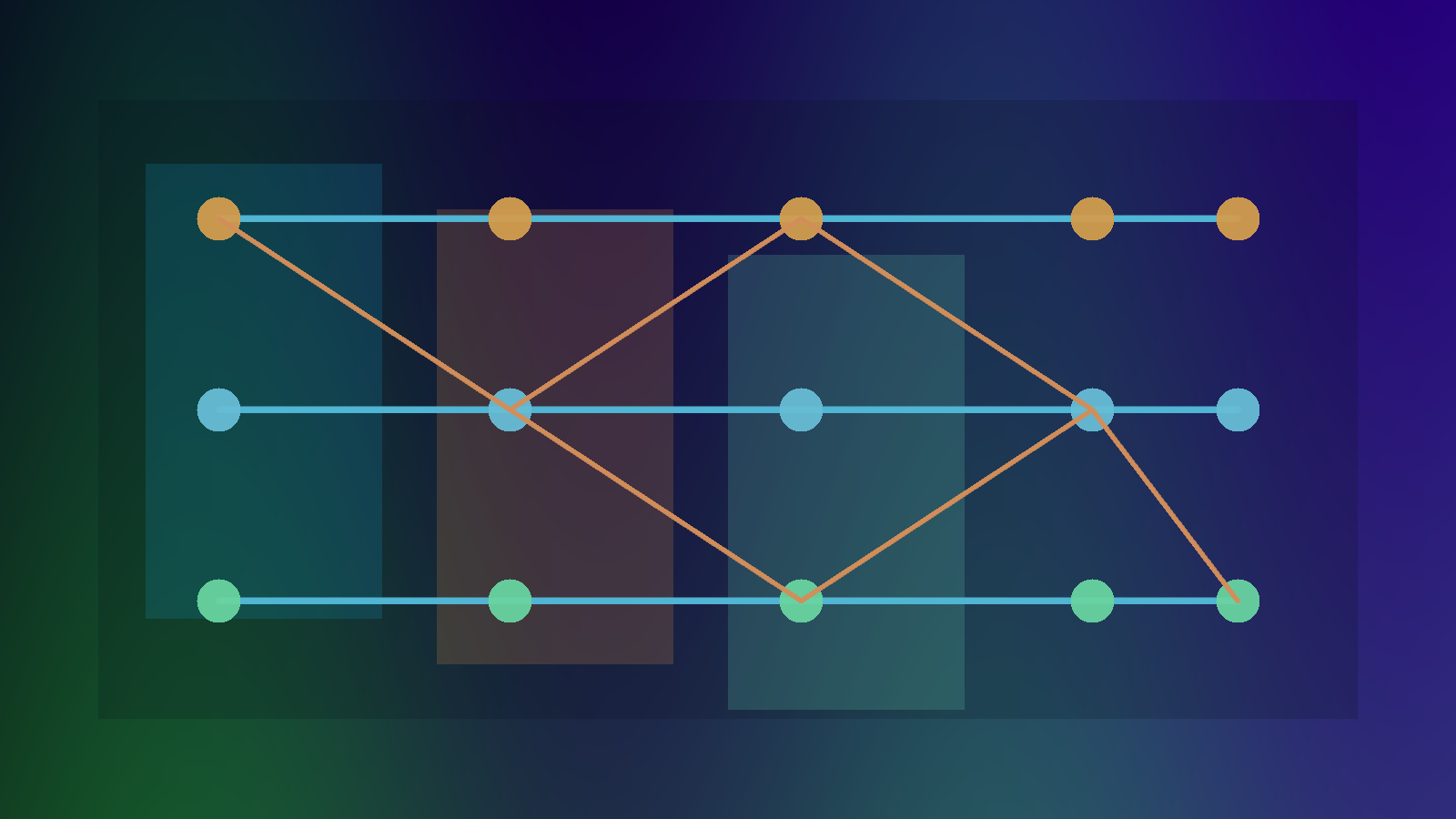

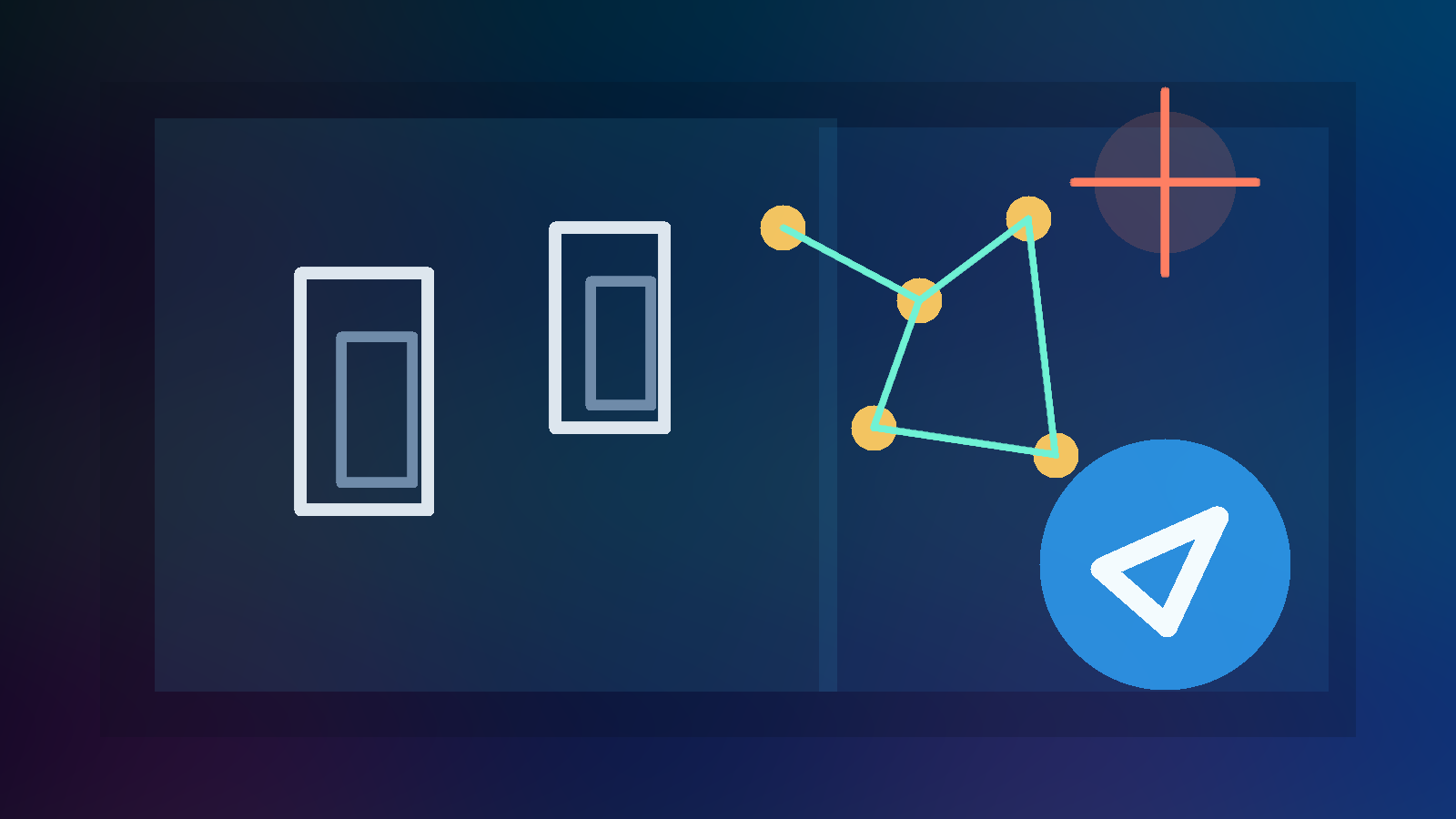

- OpenClaw plugin and skill examples

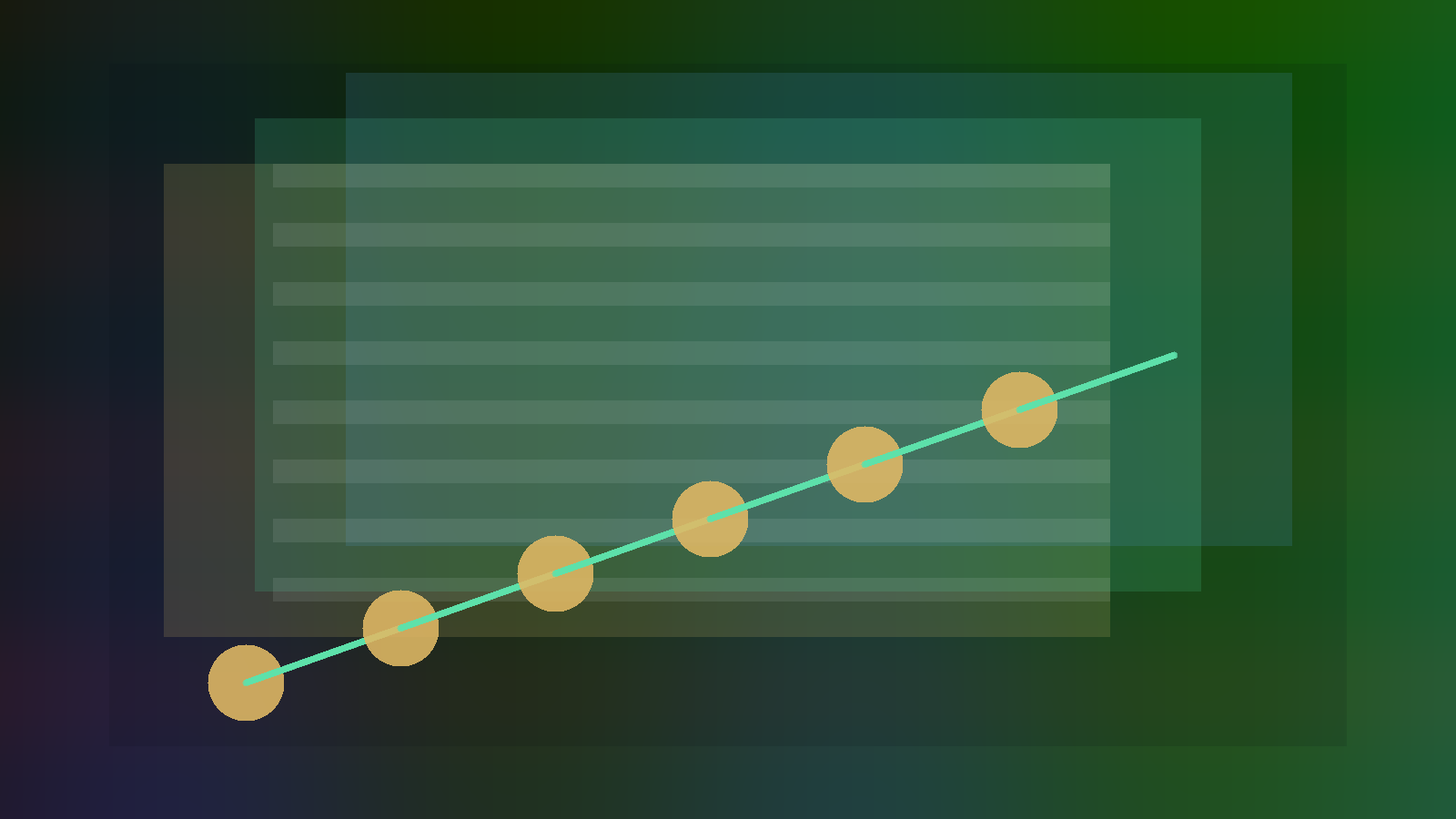

- Two paperclip comparison scenarios

- Claude Code workflow contrast